SLAM是什么?

SLAM(Simultaneous Localization and Mapping)同时定位与建图,是机器人在未知环境中自主导航的关键技术。

SLAM的挑战

想象你被蒙上眼睛放入一个陌生房间:

- 定位:我在哪里?

- 建图:周围有什么?

- 同时性:没有地图无法定位,不知道位置无法建图

这就是”鸡生蛋蛋生鸡”的SLAM问题。

SLAM的分类

按传感器分类

激光SLAM

- 传感器:2D/3D激光雷达

- 优点:精度高,不受光照影响

- 缺点:价格贵,数据量大

- 应用:扫地机器人、自动驾驶

视觉SLAM

- 传感器:单目/双目/RGB-D相机

- 优点:成本低,信息丰富

- 缺点:受光照影响,计算量大

- 应用:AR/VR、无人机

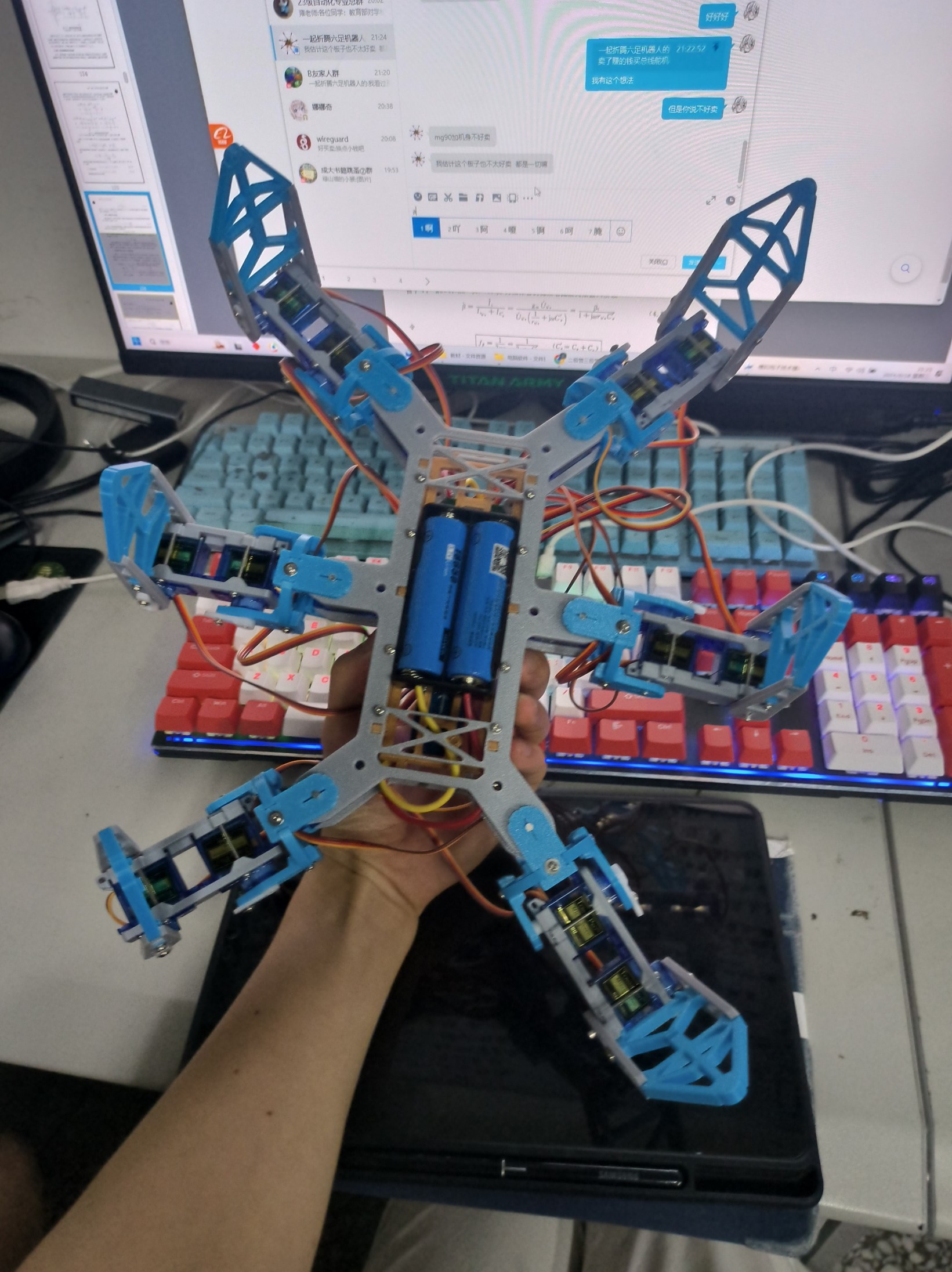

融合SLAM

- 传感器:激光+视觉+IMU

- 优点:精度高,鲁棒性强

- 应用:高端机器人

按环境分类

- 2D SLAM:平面环境

- 3D SLAM:三维空间

SLAM框架

前端(Frontend)

作用:处理传感器数据,提取特征

主要任务:

- 特征提取

- 数据关联

- 位姿估计

后端(Backend)

作用:优化地图和位姿

主要任务:

- 图优化

- 滤波

- 闭环检测

回环检测(Loop Closure)

识别曾经到过的地方,消除累积误差。

激光SLAM基础

Gmapping算法

基于粒子滤波的2D SLAM:

1

2

3

4

5

6

7

8

9

10

11

12

13

14

15

16

17

18

19

20

21

22

23

24

| import rospy

from nav_msgs.msg import OccupancyGrid

from sensor_msgs.msg import LaserScan

class GmappingSLAM:

def __init__(self):

self.particles = []

self.map = OccupancyGrid()

rospy.Subscriber('/scan', LaserScan, self.scan_callback)

def scan_callback(self, scan):

self.predict(odometry)

self.update_weights(scan)

self.resample()

self.update_map(scan)

|

Cartographer

Google开源的SLAM方案,使用子图优化:

1

2

3

4

5

6

7

8

9

10

11

12

|

TRAJECTORY_BUILDER_2D = {

use_imu_data = true,

min_range = 0.3,

max_range = 30.0,

ceres_scan_matcher = {

translation_weight = 10.0,

rotation_weight = 1.0,

},

}

|

视觉SLAM基础

ORB-SLAM2

经典的单目SLAM方案:

1

2

3

4

5

6

7

8

9

10

11

12

13

14

15

16

17

18

19

20

21

22

23

|

class System {

public:

void TrackMonocular(cv::Mat &im, double timestamp) {

vector<cv::KeyPoint> vKeys;

cv::Mat mDescriptors;

mpORBextractor->operator()(im, vKeys, mDescriptors);

if (mState == OK) {

TrackWithMotionModel();

}

TrackLocalMap();

if (NeedNewKeyFrame()) {

CreateNewKeyFrame();

}

}

};

|

VINS-Mono

单目视觉-惯性SLAM:

1

2

3

4

5

6

7

8

9

10

11

12

13

14

15

16

17

18

19

20

|

void FeatureTracker::readImage(const cv::Mat &_img) {

cv::Mat img = _img;

vector<uchar> status;

vector<float> err;

cv::calcOpticalFlowPyrLK(

prev_img, img,

prev_pts, cur_pts,

status, err

);

cv::goodFeaturesToTrack(

img, n_pts,

MAX_CNT - cur_pts.size(),

0.01, MIN_DIST

);

}

|

ROS中使用SLAM

启动Gmapping

1

2

3

4

5

|

rosrun gmapping slam_gmapping \

scan:=/scan \

_base_frame:=base_link \

_map_update_interval:=5.0

|

保存地图

1

2

|

rosrun map_server map_saver -f my_map

|

会生成两个文件:

my_map.pgm:地图图片my_map.yaml:地图元数据

加载地图导航

1

2

|

roslaunch navigation navigation.launch map_file:=my_map.yaml

|

Python实现简单SLAM

基于特征的SLAM

1

2

3

4

5

6

7

8

9

10

11

12

13

14

15

16

17

18

19

20

21

22

23

24

25

26

27

28

29

30

31

32

33

34

35

36

37

38

39

40

41

42

43

44

45

46

47

48

49

50

51

52

53

54

55

56

57

58

59

60

61

62

63

64

65

66

| import numpy as np

import cv2

class SimpleSLAM:

def __init__(self):

self.orb = cv2.ORB_create()

self.bf = cv2.BFMatcher(cv2.NORM_HAMMING)

self.trajectory = []

self.map_points = []

def process_frame(self, image):

kp, des = self.orb.detectAndCompute(image, None)

if len(self.trajectory) == 0:

self.last_kp = kp

self.last_des = des

self.trajectory.append(np.eye(4))

return

matches = self.bf.knnMatch(self.last_des, des, k=2)

good_matches = []

for m, n in matches:

if m.distance < 0.75 * n.distance:

good_matches.append(m)

if len(good_matches) > 10:

src_pts = np.float32([

self.last_kp[m.queryIdx].pt for m in good_matches

]).reshape(-1, 1, 2)

dst_pts = np.float32([

kp[m.trainIdx].pt for m in good_matches

]).reshape(-1, 1, 2)

E, mask = cv2.findEssentialMat(

src_pts, dst_pts,

focal=718.856, pp=(607.1928, 185.2157),

method=cv2.RANSAC, prob=0.999, threshold=1.0

)

_, R, t, mask = cv2.recoverPose(E, src_pts, dst_pts)

T = np.eye(4)

T[:3, :3] = R

T[:3, 3] = t.reshape(3)

self.trajectory.append(

self.trajectory[-1] @ T

)

self.last_kp = kp

self.last_des = des

def get_trajectory(self):

return np.array([T[:3, 3] for T in self.trajectory])

|

SLAM评估指标

绝对轨迹误差(ATE)

1

2

3

4

5

6

7

8

9

10

11

12

| def compute_ate(gt_poses, est_poses):

"""

计算绝对轨迹误差

gt_poses: 真实位姿

est_poses: 估计位姿

"""

ate = np.linalg.norm(gt_poses - est_poses, axis=1)

return {

'mean': np.mean(ate),

'rmse': np.sqrt(np.mean(ate**2)),

'std': np.std(ate)

}

|

相对位姿误差(RPE)

1

2

3

4

5

6

7

8

9

10

11

12

13

14

15

16

17

18

19

20

21

22

23

24

25

26

27

28

| def compute_rpe(gt_poses, est_poses, delta=1):

"""

计算相对位姿误差

delta: 帧间隔

"""

rpe_trans = []

rpe_rot = []

for i in range(len(gt_poses) - delta):

gt_rel = np.linalg.inv(gt_poses[i]) @ gt_poses[i + delta]

est_rel = np.linalg.inv(est_poses[i]) @ est_poses[i + delta]

error = np.linalg.inv(gt_rel) @ est_rel

rpe_trans.append(np.linalg.norm(error[:3, 3]))

rpe_rot.append(np.arccos(

(np.trace(error[:3, :3]) - 1) / 2

))

return {

'trans_mean': np.mean(rpe_trans),

'rot_mean': np.rad2deg(np.mean(rpe_rot))

}

|

常用数据集

TUM RGB-D Dataset

1

2

3

4

5

6

7

8

9

10

11

12

13

14

15

16

17

18

19

20

|

def load_tum_dataset(path):

rgb_files = sorted(glob.glob(f"{path}/rgb/*.png"))

depth_files = sorted(glob.glob(f"{path}/depth/*.png"))

with open(f"{path}/groundtruth.txt") as f:

lines = f.readlines()

gt_poses = []

for line in lines:

if line[0] == '#':

continue

data = [float(x) for x in line.split()]

gt_poses.append(data)

return rgb_files, depth_files, gt_poses

|

KITTI Dataset

自动驾驶场景,提供激光雷达、相机等多传感器数据。

实战:基于ROS的导航

1. 建图

1

2

3

4

5

6

7

8

9

10

11

|

roslaunch robot bringup.launch

roslaunch robot gmapping.launch

roslaunch robot teleop.launch

rosrun map_server map_saver -f office_map

|

2. 导航

1

2

3

4

5

6

7

8

|

roslaunch robot navigation.launch

|

3. Python控制导航

1

2

3

4

5

6

7

8

9

10

11

12

13

14

15

16

17

18

19

20

21

22

23

24

25

26

27

28

29

30

| import rospy

from move_base_msgs.msg import MoveBaseAction, MoveBaseGoal

import actionlib

def navigate_to_goal(x, y, theta):

client = actionlib.SimpleActionClient('move_base', MoveBaseAction)

client.wait_for_server()

goal = MoveBaseGoal()

goal.target_pose.header.frame_id = "map"

goal.target_pose.header.stamp = rospy.Time.now()

goal.target_pose.pose.position.x = x

goal.target_pose.pose.position.y = y

goal.target_pose.pose.orientation.w = np.cos(theta / 2)

goal.target_pose.pose.orientation.z = np.sin(theta / 2)

client.send_goal(goal)

client.wait_for_result()

return client.get_result()

rospy.init_node('navigator')

result = navigate_to_goal(2.0, 1.0, 0)

|

进阶话题

1. 多传感器融合

1

2

3

4

5

6

7

8

9

10

11

12

13

14

15

|

class SensorFusion {

void predict(IMU imu_data) {

x_pred = F * x + B * u;

P_pred = F * P * F.transpose() + Q;

}

void update(LaserScan scan) {

K = P_pred * H.transpose() * (H * P_pred * H.transpose() + R).inverse();

x = x_pred + K * (z - H * x_pred);

P = (I - K * H) * P_pred;

}

};

|

2. 动态环境SLAM

处理移动物体,避免干扰建图。

3. 语义SLAM

结合深度学习,理解场景语义。

常见问题

1. 建图漂移

2. 特征匹配失败

3. 计算资源不足

总结

SLAM是机器人自主导航的核心,涉及:

- 数学基础(线性代数、概率论、优化)

- 传感器原理

- 算法实现

建议学习路径:

- 掌握数学基础

- 学习经典算法(EKF-SLAM、粒子滤波)

- 实践开源项目(ORB-SLAM2、Cartographer)

- 参加比赛积累经验

推荐资源: